Ridge GLM Gamma model

GLMGamma.Rmd

library(rvfl)

glmGamma <- function(formula, ...) {

stats::glm(formula = formula,

family = Gamma(link = "log"),

...)

}Example 1: MPG Prediction (mtcars dataset)

Fit models

# Fit regular linear model

start <- proc.time()[3]

lm_model <- lm(mpg ~ ., data = train_data)

print(proc.time()[3] - start)## elapsed

## 0.014##

## Call:

## lm(formula = mpg ~ ., data = train_data)

##

## Residuals:

## Min 1Q Median 3Q Max

## -3.5211 -0.9792 -0.0324 1.1808 4.9814

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) -5.054416 25.456900 -0.199 0.8455

## cyl 0.695392 1.396506 0.498 0.6262

## disp 0.005254 0.017342 0.303 0.7664

## hp -0.007610 0.027723 -0.274 0.7877

## drat 4.128157 2.724353 1.515 0.1520

## wt -1.621396 2.139071 -0.758 0.4610

## qsec 0.064356 0.932144 0.069 0.9459

## vs 0.138716 3.421183 0.041 0.9682

## am -0.498476 2.956568 -0.169 0.8685

## gear 4.402648 2.287816 1.924 0.0749 .

## carb -1.999389 1.299580 -1.538 0.1462

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 2.464 on 14 degrees of freedom

## Multiple R-squared: 0.8938, Adjusted R-squared: 0.818

## F-statistic: 11.79 on 10 and 14 DF, p-value: 3.4e-05## 2.5 % 97.5 %

## (Intercept) -59.65403559 49.54520296

## cyl -2.29981561 3.69060001

## disp -0.03194096 0.04244882

## hp -0.06707095 0.05185084

## drat -1.71500030 9.97131342

## wt -6.20924769 2.96645550

## qsec -1.93489537 2.06360651

## vs -7.19899241 7.47642359

## am -6.83968216 5.84273112

## gear -0.50422869 9.30952400

## carb -4.78671119 0.78793282

# Fit calibrated model

start <- proc.time()[3]

ridge_model <- rvfl::calibmodel(lambda=NULL, positive_response = TRUE, x = as.matrix(train_data[,-1]), y = train_data$mpg, engine = glmGamma)

print(proc.time()[3] - start)## elapsed

## 0.04##

## Call:

## stats::glm(formula = formula, family = Gamma(link = "log"), data = ..1)

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 2.94153 0.04284 68.662 6.81e-06 ***

## cyl -3.18015 1.95452 -1.627 0.202

## disp 0.95504 0.71872 1.329 0.276

## hp -1.49915 0.98943 -1.515 0.227

## drat 0.97134 0.45344 2.142 0.122

## wt 2.15858 1.28739 1.677 0.192

## qsec -1.32771 0.90071 -1.474 0.237

## vs -2.55594 1.39220 -1.836 0.164

## am -2.50217 1.43916 -1.739 0.180

## gear 2.79639 1.51668 1.844 0.162

## carb -1.97406 1.03051 -1.916 0.151

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for Gamma family taken to be 0.01371843)

##

## Null deviance: 1.156515 on 13 degrees of freedom

## Residual deviance: 0.041053 on 3 degrees of freedom

## AIC: 65.641

##

## Number of Fisher Scoring iterations: 4## Waiting for profiling to be done...## 2.5 % 97.5 %

## (Intercept) 2.85858732 3.02673592

## cyl -7.04397492 0.69526340

## disp -0.48201838 2.37848553

## hp -3.45155751 0.46247950

## drat 0.07625411 1.86598970

## wt -0.37817990 4.69952637

## qsec -3.11775220 0.45411590

## vs -5.29353223 0.19757977

## am -5.33932399 0.34822089

## gear -0.20518286 5.78249167

## carb -4.00729579 0.05956867

#print(simulate(ridge_model, newdata = test_data))Make predictions

lm_pred <- predict(lm_model, newdata = test_data, interval = "prediction")

ridge_pred <- predict(ridge_model, newdata = as.matrix(test_data), method="surrogate")## Registered S3 method overwritten by 'quantmod':

## method from

## as.zoo.data.frame zooCompare predictions

results <- data.frame(

Actual = mtcars[-train_idx, ]$mpg,

LM_Pred = lm_pred[,"fit"],

LM_Lower = lm_pred[,"lwr"],

LM_Upper = lm_pred[,"upr"],

Ridge_Pred = ridge_pred[,"fit"],

Ridge_Lower = ridge_pred[,"lwr"],

Ridge_Upper = ridge_pred[,"upr"]

)

# Print results

print("Prediction Intervals Comparison:")## [1] "Prediction Intervals Comparison:"## Actual LM_Pred LM_Lower LM_Upper Ridge_Pred Ridge_Lower

## Valiant 18.1 17.93324 10.149847 25.71663 2.983062 0.7303993

## Merc 280C 17.8 20.63530 13.636618 27.63398 28.181406 6.9001851

## Toyota Corolla 33.9 28.58373 22.379666 34.78779 29.560768 7.2379202

## Camaro Z28 13.3 15.85710 8.140858 23.57335 21.872860 3.2783652

## Porsche 914-2 26.0 31.07535 18.988702 43.16201 161.398742 39.5182970

## Ford Pantera L 15.8 27.07516 14.930150 39.22016 51.821216 12.6883654

## Ridge_Upper

## Valiant 13.50603

## Merc 280C 127.59334

## Toyota Corolla 133.83850

## Camaro Z28 60.62121

## Porsche 914-2 730.74438

## Ford Pantera L 234.62427

# Calculate coverage and Winkler scores

lm_coverage <- mean(mtcars[-train_idx, ]$mpg >= results$LM_Lower &

mtcars[-train_idx, ]$mpg <= results$LM_Upper)

ridge_coverage <- mean(mtcars[-train_idx, ]$mpg >= results$Ridge_Lower &

mtcars[-train_idx, ]$mpg <= results$Ridge_Upper)

lm_winkler <- misc::winkler_score(mtcars[-train_idx, ]$mpg, results$LM_Lower, results$LM_Upper)

ridge_winkler <- misc::winkler_score(mtcars[-train_idx, ]$mpg, results$Ridge_Lower, results$Ridge_Upper)

print(sprintf("\nPrediction interval metrics:"))## [1] "\nPrediction interval metrics:"

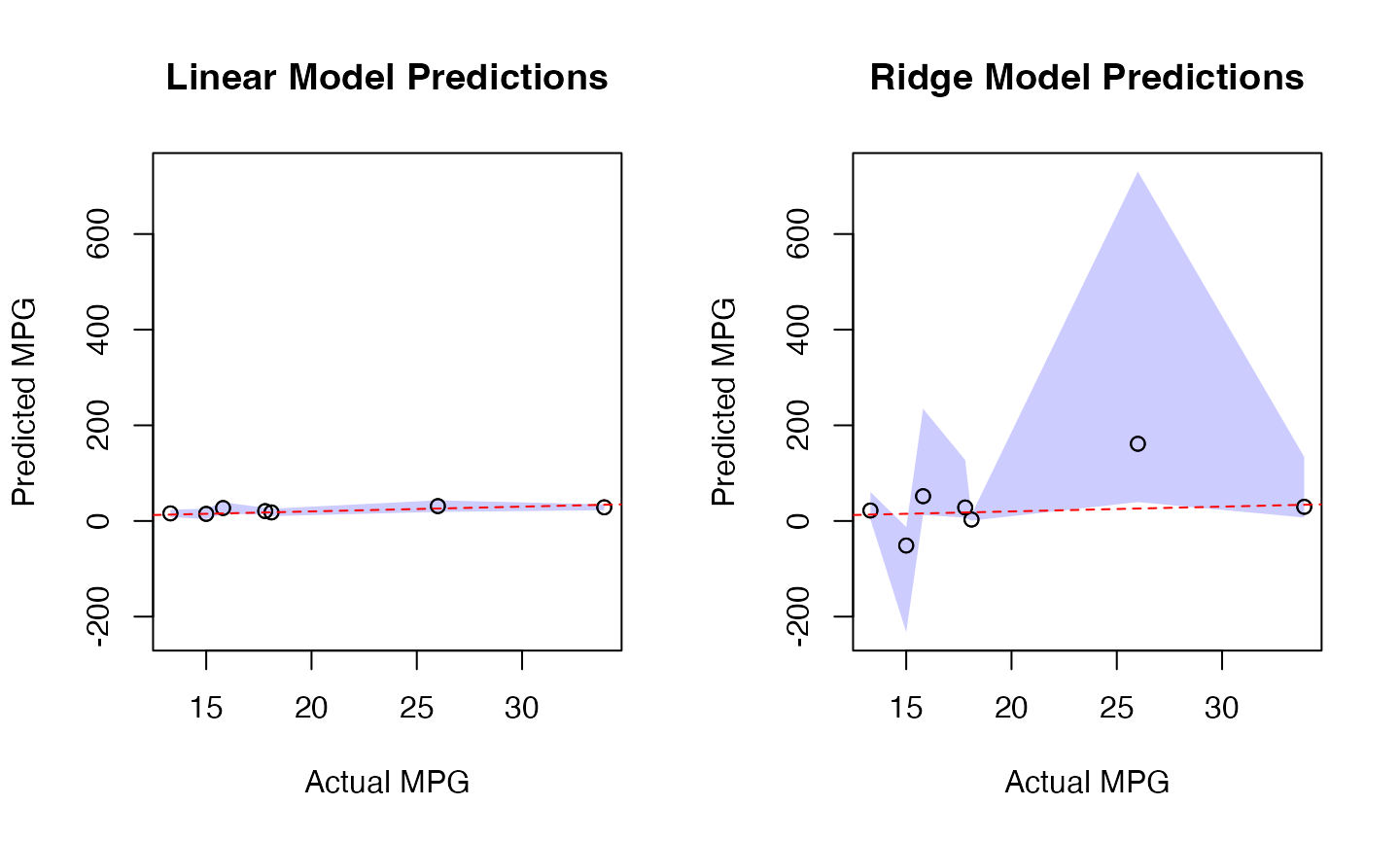

print(sprintf("Linear Model: %.1f%% coverage, %.3f Winkler score",

100 * lm_coverage, mean(lm_winkler)))## [1] "Linear Model: 100.0% coverage, 18.226 Winkler score"

print(sprintf("Calibrated Model: %.1f%% coverage, %.3f Winkler score",

100 * ridge_coverage, mean(ridge_winkler)))## [1] "Calibrated Model: 57.1% coverage, 468.268 Winkler score"

# Set common y-axis limits for both plots

y_limits <- range(c(results$LM_Lower, results$LM_Upper,

results$Ridge_Lower, results$Ridge_Upper))

# Plot prediction intervals

par(mfrow=c(1,2))

# Linear Model Plot

plot(results$Actual, results$LM_Pred,

main="Linear Model Predictions",

xlab="Actual MPG", ylab="Predicted MPG",

ylim=y_limits)

# Add shaded prediction intervals

x_ordered <- order(results$Actual)

polygon(c(results$Actual[x_ordered], rev(results$Actual[x_ordered])),

c(results$LM_Lower[x_ordered], rev(results$LM_Upper[x_ordered])),

col=rgb(0, 0, 1, 0.2), border=NA)

points(results$Actual, results$LM_Pred) # Replot points over shading

abline(0, 1, col="red", lty=2) # Add diagonal line

# Ridge Model Plot

plot(results$Actual, results$Ridge_Pred,

main="Ridge Model Predictions",

xlab="Actual MPG", ylab="Predicted MPG",

ylim=y_limits)

# Add shaded prediction intervals

polygon(c(results$Actual[x_ordered], rev(results$Actual[x_ordered])),

c(results$Ridge_Lower[x_ordered], rev(results$Ridge_Upper[x_ordered])),

col=rgb(0, 0, 1, 0.2), border=NA)

points(results$Actual, results$Ridge_Pred) # Replot points over shading

abline(0, 1, col="red", lty=2) # Add diagonal line

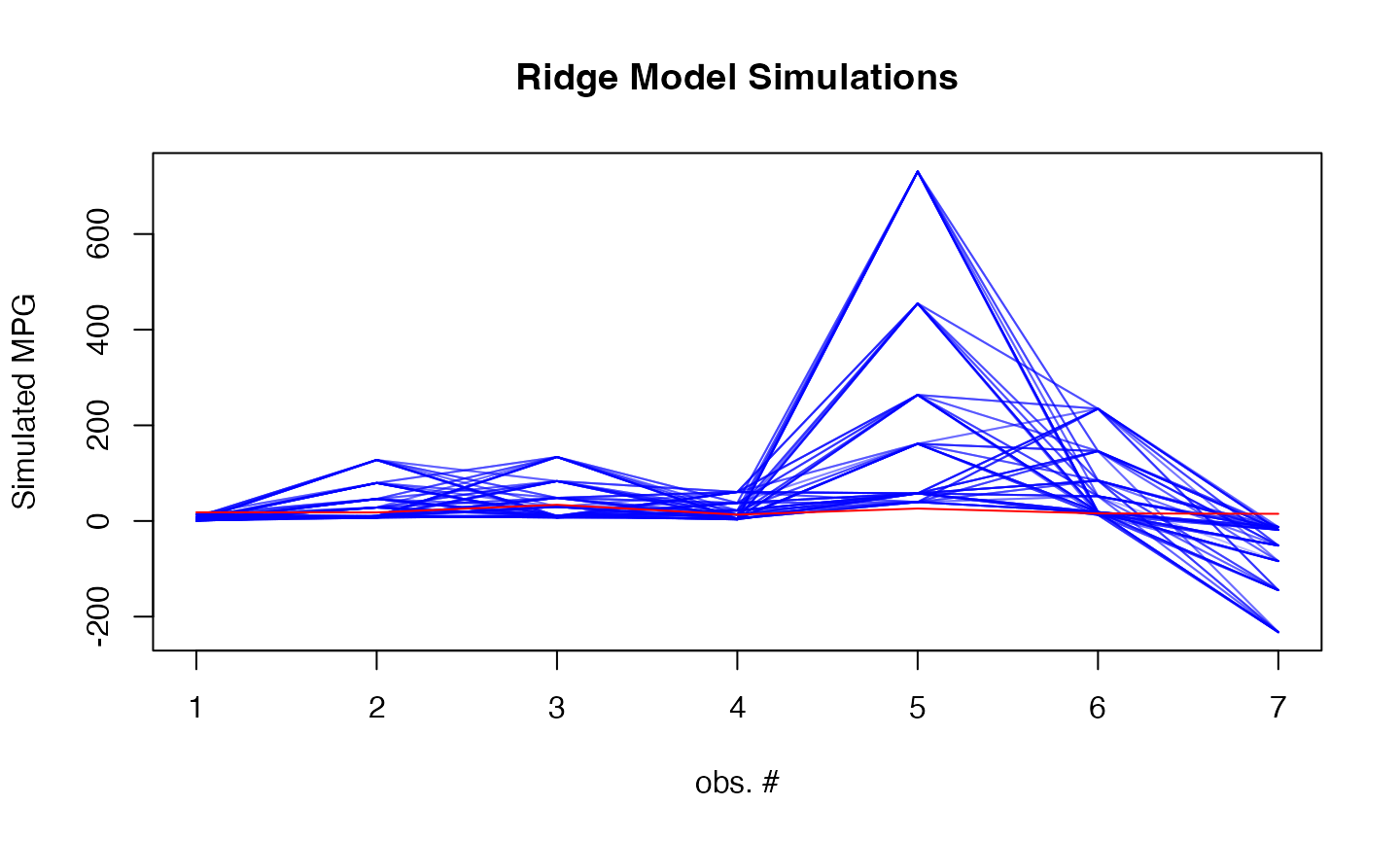

# Add simulation plot

par(mfrow=c(1,1))

# Generate 100 simulations

sims <- simulate(ridge_model, newdata = as.matrix(test_data), nsim = 500, method="surrogate")

# Plot simulations

matplot(sims, type = "l",

col = rgb(0, 0, 1, 0.1), lty = 1,

xlab = "obs. #", ylab = "Simulated MPG",

main = "Ridge Model Simulations")

lines(mtcars[-train_idx, ]$mpg, col = "red")

Example 2: Boston Housing Price Prediction

Fit models

# Fit regular linear model

start <- proc.time()[3]

lm_model <- lm(medv ~ ., data = train_data)

print(proc.time()[3] - start)## elapsed

## 0.003##

## Call:

## lm(formula = medv ~ ., data = train_data)

##

## Residuals:

## Min 1Q Median 3Q Max

## -15.220 -2.757 -0.494 1.863 26.961

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 35.832339 5.763210 6.217 1.30e-09 ***

## crim -0.095389 0.034717 -2.748 0.006282 **

## zn 0.042689 0.016086 2.654 0.008283 **

## indus -0.033013 0.073521 -0.449 0.653657

## chas 2.506064 0.939731 2.667 0.007977 **

## nox -17.521010 4.237379 -4.135 4.35e-05 ***

## rm 3.966727 0.477640 8.305 1.66e-15 ***

## age 0.006479 0.014922 0.434 0.664410

## dis -1.463187 0.232348 -6.297 8.17e-10 ***

## rad 0.253984 0.075379 3.369 0.000828 ***

## tax -0.009853 0.004350 -2.265 0.024068 *

## ptratio -1.002914 0.147016 -6.822 3.44e-11 ***

## black 0.008723 0.002984 2.923 0.003664 **

## lstat -0.501984 0.057704 -8.699 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.835 on 390 degrees of freedom

## Multiple R-squared: 0.7403, Adjusted R-squared: 0.7316

## F-statistic: 85.51 on 13 and 390 DF, p-value: < 2.2e-16## 2.5 % 97.5 %

## (Intercept) 24.50149090 47.16318623

## crim -0.16364441 -0.02713269

## zn 0.01106383 0.07431451

## indus -0.17755967 0.11153316

## chas 0.65849254 4.35363615

## nox -25.85197459 -9.19004505

## rm 3.02765548 4.90579898

## age -0.02285933 0.03581667

## dis -1.91999833 -1.00637601

## rad 0.10578379 0.40218383

## tax -0.01840515 -0.00129986

## ptratio -1.29195676 -0.71387117

## black 0.00285660 0.01458927

## lstat -0.61543411 -0.38853422

# Fit calibrated model

start <- proc.time()[3]

ridge_model <- rvfl::calibmodel(lambda=NULL, positive_response = TRUE, x = as.matrix(train_data[,-14]), y = train_data$medv, engine = glmGamma)

print(proc.time()[3] - start)## elapsed

## 0.012##

## Call:

## stats::glm(formula = formula, family = Gamma(link = "log"), data = ..1)

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 3.04570 0.01651 184.515 < 2e-16 ***

## crim -0.08451 0.02335 -3.619 0.000379 ***

## zn 0.02528 0.02391 1.057 0.291789

## indus 0.02593 0.03775 0.687 0.492982

## chas 0.02093 0.01647 1.270 0.205517

## nox -0.11954 0.03312 -3.610 0.000393 ***

## rm 0.05966 0.02250 2.652 0.008685 **

## age 0.05202 0.02849 1.826 0.069448 .

## dis -0.07763 0.03261 -2.381 0.018277 *

## rad 0.10462 0.05120 2.043 0.042420 *

## tax -0.07266 0.06061 -1.199 0.232108

## ptratio -0.07840 0.02199 -3.566 0.000459 ***

## black 0.02940 0.02044 1.438 0.151964

## lstat -0.22002 0.02748 -8.007 1.17e-13 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for Gamma family taken to be 0.05202511)

##

## Null deviance: 32.7718 on 202 degrees of freedom

## Residual deviance: 8.7935 on 189 degrees of freedom

## AIC: 1199.1

##

## Number of Fisher Scoring iterations: 8

#print(confint(ridge_model$model))

#print(simulate(ridge_model, newdata = test_data))Make predictions and compare

lm_pred <- predict(lm_model, newdata = test_data, interval = "prediction")

ridge_pred <- predict(ridge_model, newdata = as.matrix(test_data), method="surrogate")

results <- data.frame(

Actual = Boston[-train_idx, ]$medv,

LM_Pred = lm_pred[,"fit"],

LM_Lower = lm_pred[,"lwr"],

LM_Upper = lm_pred[,"upr"],

Ridge_Pred = ridge_pred[,"fit"],

Ridge_Lower = ridge_pred[,"lwr"],

Ridge_Upper = ridge_pred[,"upr"]

)

# Print results

print("Prediction Intervals Comparison:")## [1] "Prediction Intervals Comparison:"## Actual LM_Pred LM_Lower LM_Upper Ridge_Pred Ridge_Lower Ridge_Upper

## 1 24.0 30.57209 20.958399 40.18579 21.38883 12.331627 40.40661

## 3 34.7 30.68339 21.107377 40.25940 22.07985 12.528040 40.61359

## 4 33.4 28.70511 19.107688 38.30253 22.36250 11.501108 43.84226

## 18 17.5 17.06191 7.487523 26.63630 19.42386 9.791957 37.56123

## 21 13.6 12.85420 3.239688 22.46872 18.03647 11.587452 34.46784

## 24 14.5 14.14956 4.535627 23.76348 18.28240 9.701505 32.88123

# Calculate coverage and Winkler scores

lm_coverage <- mean(Boston[-train_idx, ]$medv >= results$LM_Lower &

Boston[-train_idx, ]$medv <= results$LM_Upper)

ridge_coverage <- mean(Boston[-train_idx, ]$medv >= results$Ridge_Lower &

Boston[-train_idx, ]$medv <= results$Ridge_Upper)

lm_winkler <- misc::winkler_score(Boston[-train_idx, ]$medv, results$LM_Lower, results$LM_Upper)

ridge_winkler <- misc::winkler_score(Boston[-train_idx, ]$medv, results$Ridge_Lower, results$Ridge_Upper)

print(sprintf("\nPrediction interval metrics:"))## [1] "\nPrediction interval metrics:"

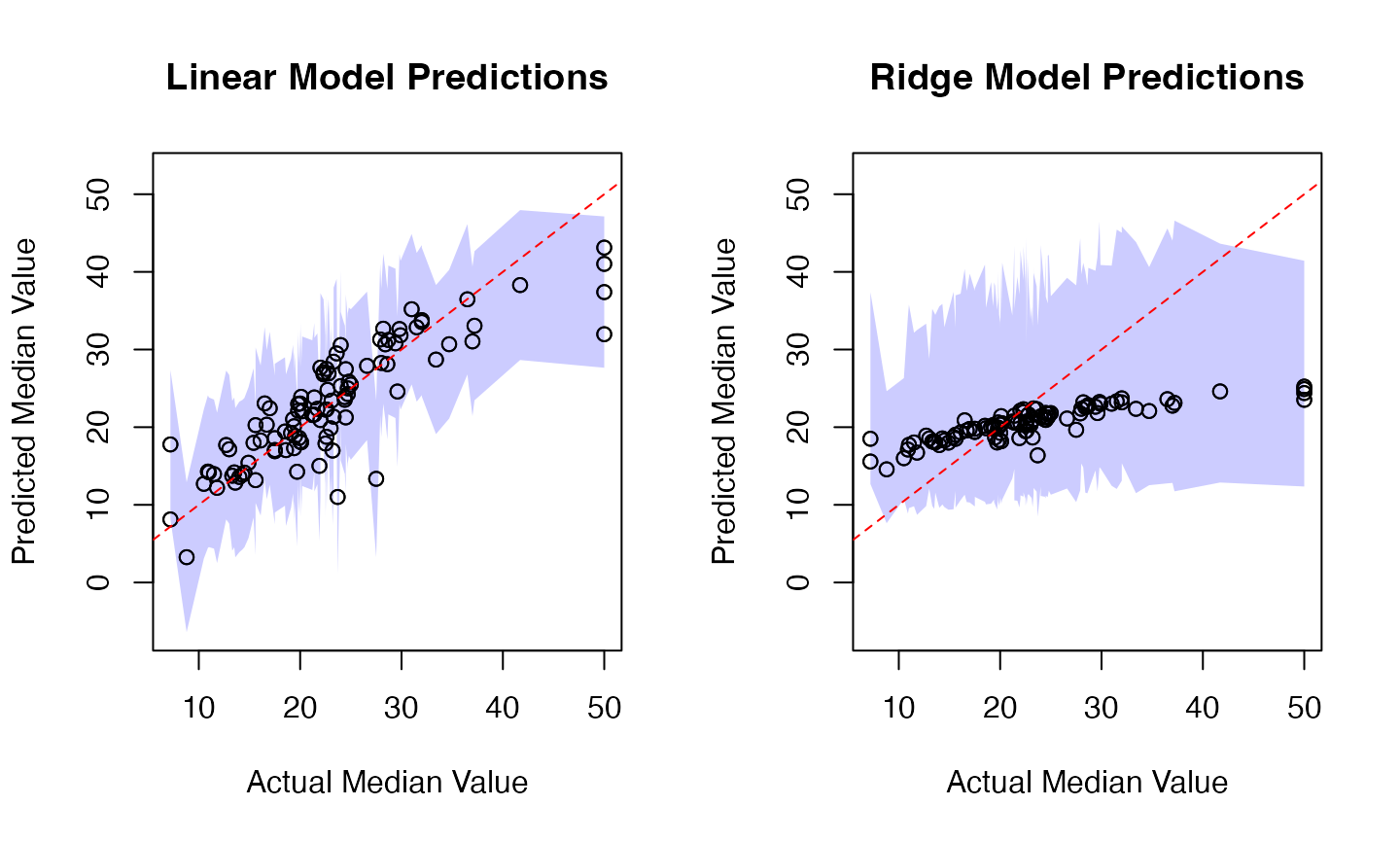

print(sprintf("Linear Model: %.1f%% coverage, %.3f Winkler score",

100 * lm_coverage, mean(lm_winkler)))## [1] "Linear Model: 95.1% coverage, 26.711 Winkler score"

print(sprintf("Calibrated Model: %.1f%% coverage, %.3f Winkler score",

100 * ridge_coverage, mean(ridge_winkler)))## [1] "Calibrated Model: 94.1% coverage, 37.422 Winkler score"

# Visualization

# Set common y-axis limits for both plots

y_limits <- range(c(results$LM_Lower, results$LM_Upper,

results$Ridge_Lower, results$Ridge_Upper))

par(mfrow=c(1,2))

# Linear Model Plot

plot(results$Actual, results$LM_Pred,

main="Linear Model Predictions",

xlab="Actual Median Value", ylab="Predicted Median Value",

ylim=y_limits)

x_ordered <- order(results$Actual)

polygon(c(results$Actual[x_ordered], rev(results$Actual[x_ordered])),

c(results$LM_Lower[x_ordered], rev(results$LM_Upper[x_ordered])),

col=rgb(0, 0, 1, 0.2), border=NA)

points(results$Actual, results$LM_Pred)

abline(0, 1, col="red", lty=2)

# Ridge Model Plot

plot(results$Actual, results$Ridge_Pred,

main="Ridge Model Predictions",

xlab="Actual Median Value", ylab="Predicted Median Value",

ylim=y_limits)

polygon(c(results$Actual[x_ordered], rev(results$Actual[x_ordered])),

c(results$Ridge_Lower[x_ordered], rev(results$Ridge_Upper[x_ordered])),

col=rgb(0, 0, 1, 0.2), border=NA)

points(results$Actual, results$Ridge_Pred)

abline(0, 1, col="red", lty=2)

# Add simulation plot

par(mfrow=c(1,1))

sims <- simulate(ridge_model, newdata = as.matrix(test_data), nsim = 500, method="surrogate")

matplot(sims, type = "l",

col = rgb(0, 0, 1, 0.1), lty = 1,

xlab = "obs. #", ylab = "Simulated Median Value",

main = "Ridge Model Simulations")

lines(Boston[-train_idx, ]$medv, col = "red")

Example 3: Car Price Analysis (Cars93 dataset)

Load and prepare data

data(Cars93, package = "MASS")

# Remove rows with missing values

Cars93 <- na.omit(Cars93)

# Select numeric predictors and price as response

predictors <- c("MPG.city", "MPG.highway", "EngineSize", "Horsepower",

"RPM", "Rev.per.mile", "Fuel.tank.capacity", "Length",

"Wheelbase", "Width", "Turn.circle", "Weight")

car_data <- Cars93[, c(predictors, "Price")]

set.seed(1243)

train_idx <- sample(nrow(car_data), size = floor(0.8 * nrow(car_data)))

train_data <- car_data[train_idx, ]

test_data <- car_data[-train_idx, -which(names(car_data) == "Price")]Fit models

# Fit regular linear model

start <- proc.time()[3]

lm_model <- lm(Price ~ ., data = train_data)

print(proc.time()[3] - start)## elapsed

## 0.004##

## Call:

## lm(formula = Price ~ ., data = train_data)

##

## Residuals:

## Min 1Q Median 3Q Max

## -8.9444 -3.4879 -0.0823 2.5740 10.7036

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 6.0285088 31.8235292 0.189 0.8505

## MPG.city -0.3069383 0.4798163 -0.640 0.5252

## MPG.highway -0.0041568 0.4591254 -0.009 0.9928

## EngineSize 2.4810783 2.4779636 1.001 0.3213

## Horsepower 0.1016741 0.0446071 2.279 0.0268 *

## RPM 0.0001602 0.0023006 0.070 0.9448

## Rev.per.mile 0.0049762 0.0026868 1.852 0.0697 .

## Fuel.tank.capacity -0.1866149 0.5198553 -0.359 0.7211

## Length 0.0203242 0.1333518 0.152 0.8795

## Wheelbase 0.4888949 0.2655782 1.841 0.0713 .

## Width -0.8957689 0.4446575 -2.015 0.0491 *

## Turn.circle -0.3835124 0.3579624 -1.071 0.2889

## Weight 0.0041302 0.0059593 0.693 0.4914

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 4.731 on 52 degrees of freedom

## Multiple R-squared: 0.7886, Adjusted R-squared: 0.7398

## F-statistic: 16.16 on 12 and 52 DF, p-value: 1.548e-13## 2.5 % 97.5 %

## (Intercept) -5.783007e+01 69.887091986

## MPG.city -1.269760e+00 0.655883438

## MPG.highway -9.254594e-01 0.917145804

## EngineSize -2.491319e+00 7.453475962

## Horsepower 1.216348e-02 0.191184768

## RPM -4.456410e-03 0.004776746

## Rev.per.mile -4.152533e-04 0.010367616

## Fuel.tank.capacity -1.229781e+00 0.856550981

## Length -2.472657e-01 0.287914102

## Wheelbase -4.402679e-02 1.021816593

## Width -1.788039e+00 -0.003498354

## Turn.circle -1.101817e+00 0.334791684

## Weight -7.828121e-03 0.016088453

# Fit calibrated model

start <- proc.time()[3]

ridge_model <- rvfl::calibmodel(lambda=NULL, positive_response = TRUE, x = as.matrix(train_data[,-which(names(train_data) == "Price")]),

y = train_data$Price, engine = glmGamma)

print(proc.time()[3] - start)## elapsed

## 0.017##

## Call:

## stats::glm(formula = formula, family = Gamma(link = "log"), data = ..1)

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 2.93417 0.05044 58.175 <2e-16 ***

## MPG.city -0.14493 0.19497 -0.743 0.466

## MPG.highway -0.01705 0.18944 -0.090 0.929

## EngineSize 0.16669 0.21278 0.783 0.443

## Horsepower 0.01388 0.19675 0.071 0.944

## RPM 0.10871 0.11009 0.988 0.335

## Rev.per.mile 0.14074 0.10078 1.396 0.178

## Fuel.tank.capacity -0.02171 0.14535 -0.149 0.883

## Length -0.11652 0.23525 -0.495 0.626

## Wheelbase 0.20613 0.20229 1.019 0.320

## Width -0.12633 0.13352 -0.946 0.355

## Turn.circle 0.01264 0.10436 0.121 0.905

## Weight 0.25993 0.25013 1.039 0.311

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for Gamma family taken to be 0.06327619)

##

## Null deviance: 6.6564 on 32 degrees of freedom

## Residual deviance: 1.2465 on 20 degrees of freedom

## AIC: 201.64

##

## Number of Fisher Scoring iterations: 8

#print(confint(ridge_model$model))

#print(simulate(ridge_model, newdata = as.matrix(test_data)))Make predictions and compare

lm_pred <- predict(lm_model, newdata = test_data, interval = "prediction")

ridge_pred <- predict(ridge_model, newdata = as.matrix(test_data), method="surrogate")

results <- data.frame(

Actual = car_data[-train_idx, "Price"],

LM_Pred = lm_pred[,"fit"],

LM_Lower = lm_pred[,"lwr"],

LM_Upper = lm_pred[,"upr"],

Ridge_Pred = ridge_pred[,"fit"],

Ridge_Lower = ridge_pred[,"lwr"],

Ridge_Upper = ridge_pred[,"upr"]

)

# Print results

print("Prediction Intervals Comparison:")## [1] "Prediction Intervals Comparison:"

print(results)## Actual LM_Pred LM_Lower LM_Upper Ridge_Pred Ridge_Lower Ridge_Upper

## 7 20.8 22.284761 12.3951390 32.17438 16.50351 10.208254 37.26172

## 12 13.4 14.354853 3.3289673 25.38074 14.63415 8.468277 30.91053

## 15 15.9 17.852333 7.2347759 28.46989 17.18962 9.947039 36.30824

## 21 15.8 19.516709 9.4219263 29.61149 16.94156 9.803492 35.78427

## 23 9.2 14.340649 3.5068316 25.17447 16.95578 8.585541 31.33856

## 29 12.2 9.028432 -1.4360619 19.49293 15.72429 7.961978 29.06246

## 30 19.3 29.775630 19.1961282 40.35513 19.23503 11.130644 40.62859

## 31 7.4 6.013838 -5.5354656 17.56314 12.26388 7.333110 26.76700

## 32 10.1 14.806645 4.1551453 25.45814 16.00235 9.455772 33.80046

## 42 12.1 8.704607 -3.6984146 21.10763 12.57599 7.277288 26.56324

## 54 11.6 10.002970 -0.3839704 20.38991 13.59705 7.868144 28.71995

## 59 61.9 34.711053 24.1509123 45.27119 20.32333 11.760409 42.92733

## 72 14.4 9.110664 -1.1222765 19.34360 14.30707 8.279003 30.21965

## 73 9.0 11.005860 0.0448295 21.96689 14.09788 8.157953 29.77780

## 76 18.5 26.775186 16.6537717 36.89660 16.92855 10.471163 38.22138

## 91 23.3 24.122428 12.0542302 36.19063 17.00590 10.519009 38.39602

## 92 22.7 18.531114 8.4532906 28.60894 16.71080 9.669962 35.29687

# Calculate coverage and Winkler scores

lm_coverage <- mean(car_data[-train_idx, "Price"] >= results$LM_Lower &

car_data[-train_idx, "Price"] <= results$LM_Upper)

ridge_coverage <- mean(car_data[-train_idx, "Price"] >= results$Ridge_Lower &

car_data[-train_idx, "Price"] <= results$Ridge_Upper)

lm_winkler <- misc::winkler_score(car_data[-train_idx, "Price"], results$LM_Lower, results$LM_Upper)

ridge_winkler <- misc::winkler_score(car_data[-train_idx, "Price"], results$Ridge_Lower, results$Ridge_Upper)

print(sprintf("\nPrediction interval metrics:"))## [1] "\nPrediction interval metrics:"

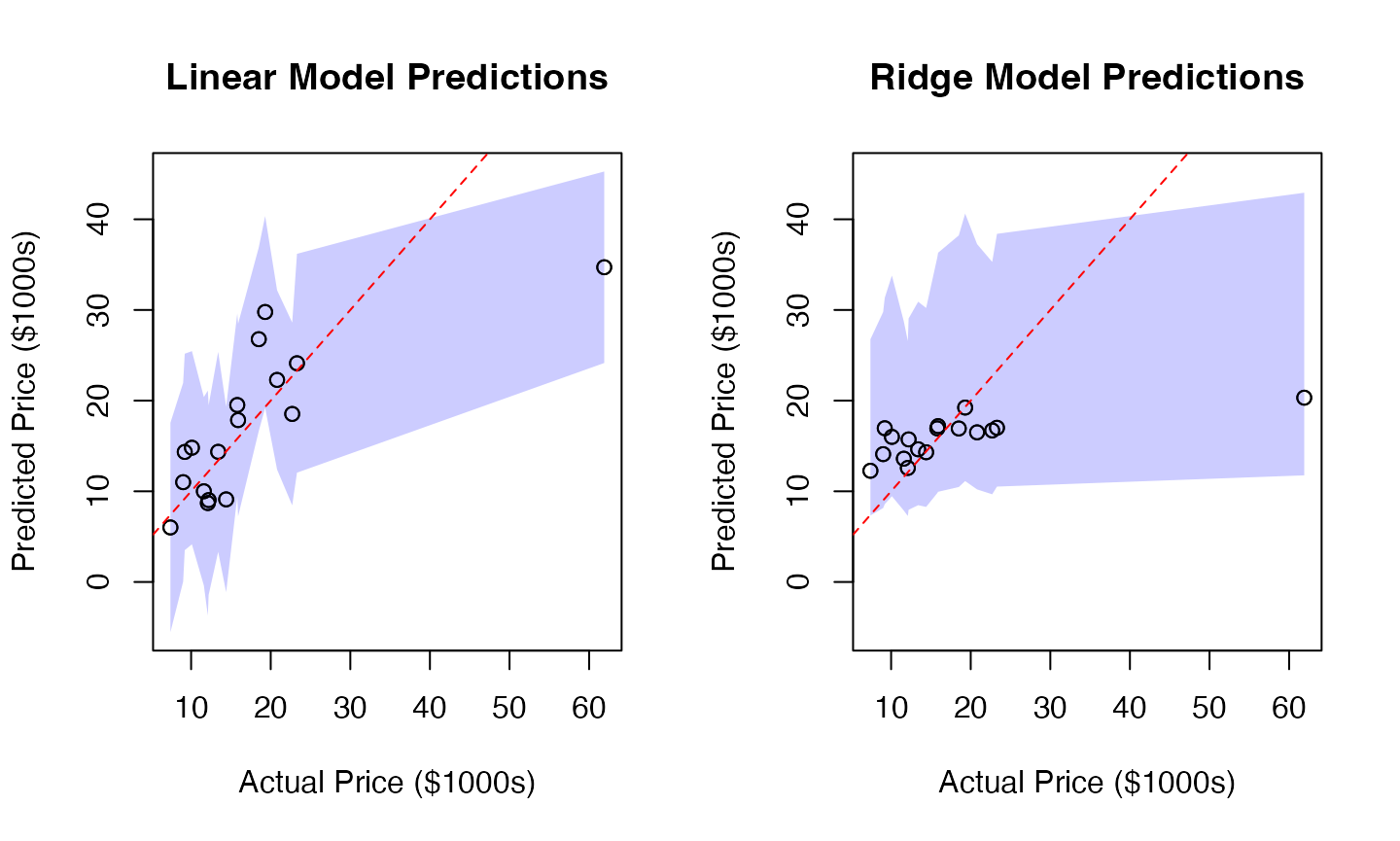

print(sprintf("Linear Model: %.1f%% coverage, %.3f Winkler score",

100 * lm_coverage, mean(lm_winkler)))## [1] "Linear Model: 94.1% coverage, 60.599 Winkler score"

print(sprintf("Calibrated Model: %.1f%% coverage, %.3f Winkler score",

100 * ridge_coverage, mean(ridge_winkler)))## [1] "Calibrated Model: 94.1% coverage, 69.058 Winkler score"

# Visualization

# Set common y-axis limits for both plots

y_limits <- range(c(results$LM_Lower, results$LM_Upper,

results$Ridge_Lower, results$Ridge_Upper))

par(mfrow=c(1,2))

# Linear Model Plot

plot(results$Actual, results$LM_Pred,

main="Linear Model Predictions",

xlab="Actual Price ($1000s)", ylab="Predicted Price ($1000s)",

ylim=y_limits)

x_ordered <- order(results$Actual)

polygon(c(results$Actual[x_ordered], rev(results$Actual[x_ordered])),

c(results$LM_Lower[x_ordered], rev(results$LM_Upper[x_ordered])),

col=rgb(0, 0, 1, 0.2), border=NA)

points(results$Actual, results$LM_Pred)

abline(0, 1, col="red", lty=2)

# Ridge Model Plot

plot(results$Actual, results$Ridge_Pred,

main="Ridge Model Predictions",

xlab="Actual Price ($1000s)", ylab="Predicted Price ($1000s)",

ylim=y_limits)

polygon(c(results$Actual[x_ordered], rev(results$Actual[x_ordered])),

c(results$Ridge_Lower[x_ordered], rev(results$Ridge_Upper[x_ordered])),

col=rgb(0, 0, 1, 0.2), border=NA)

points(results$Actual, results$Ridge_Pred)

abline(0, 1, col="red", lty=2)

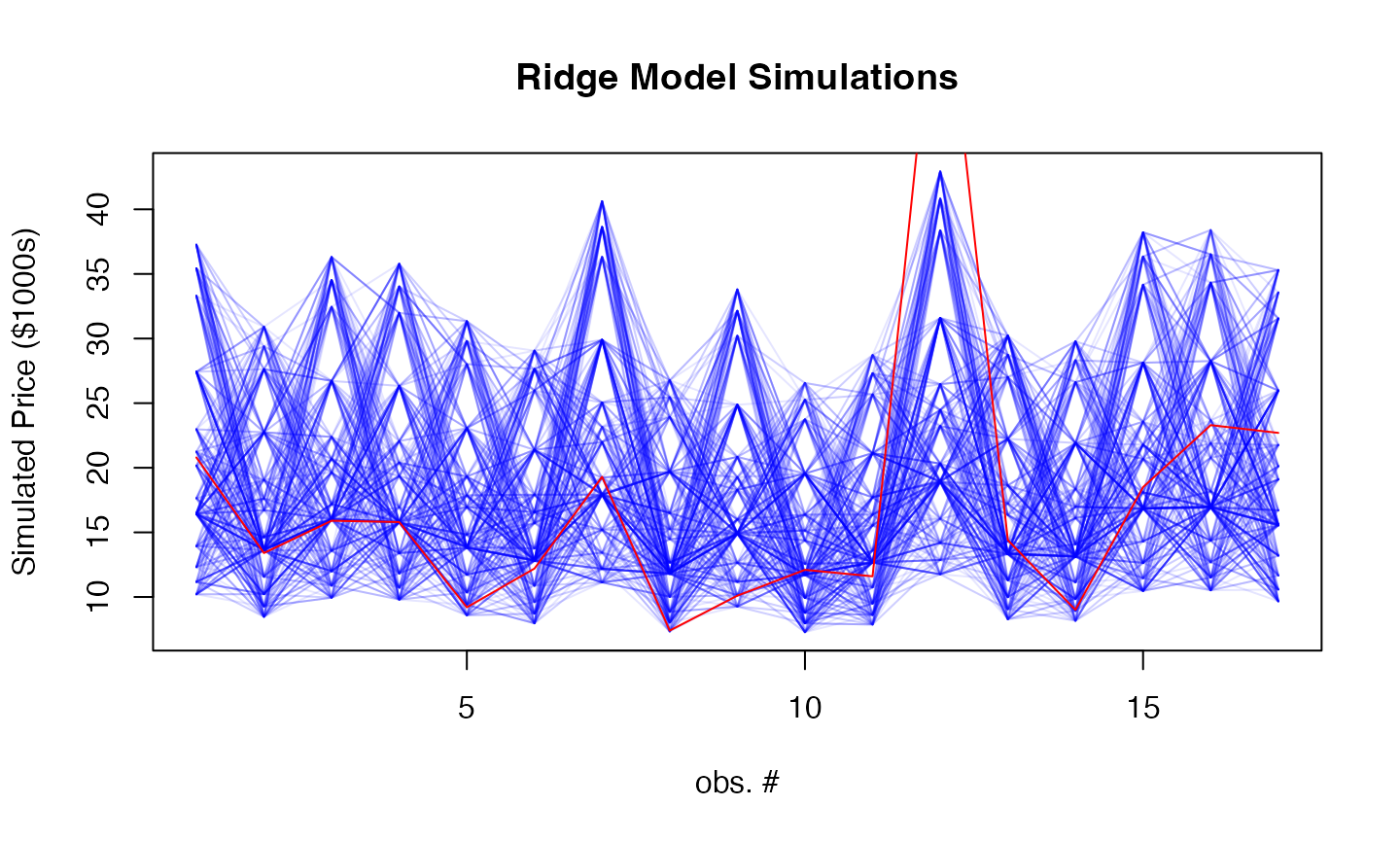

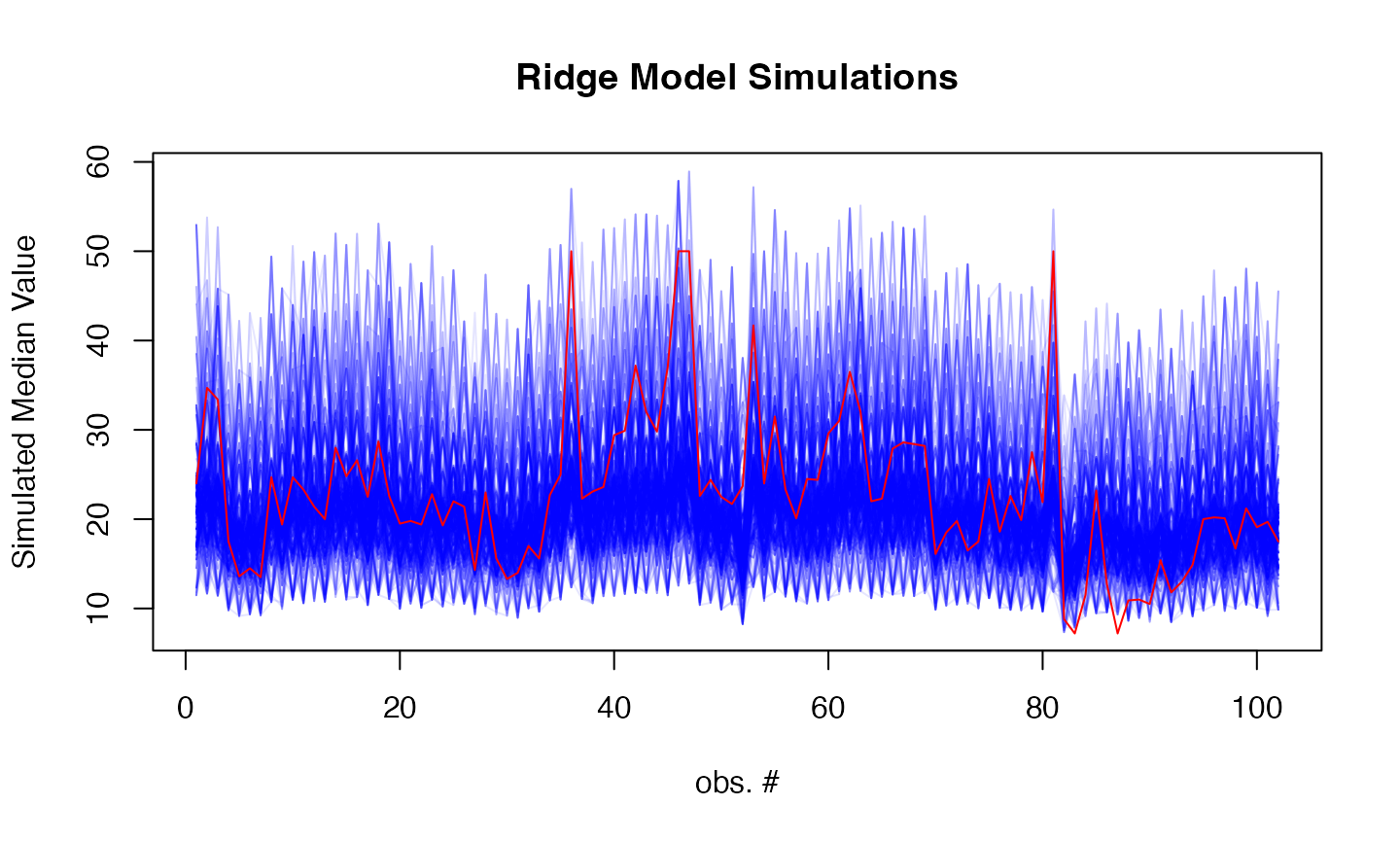

# Add simulation plot

par(mfrow=c(1,1))

sims <- simulate(ridge_model, newdata = as.matrix(test_data), nsim = 500, method="surrogate")

matplot(sims, type = "l",

col = rgb(0, 0, 1, 0.1), lty = 1,

xlab = "obs. #", ylab = "Simulated Price ($1000s)",

main = "Ridge Model Simulations")

lines(car_data[-train_idx, "Price"], col = "red")